The overall OpenJDK project hosts numerous projects and groups related to Java SE technologies. This guide primarily discusses how to develop for specific OpenJDK projects corresponding to full Java SE platforms, such as JDK 7 and OpenJDK 6. Those interested in participating should become familiar with the contribution process.

The code base that would become OpenJDK 6 and 7 had over a decade of history and over six million lines of code before the sources of JDK 7 build 11 were republished under the OpenJDK banner and licensed predominantly under the GPLv2 with classpath exception on May 8, 2007. While this event was a watershed for the code base, what's past is prologue and OpenJDK development is a continuation of JDK development, but in an expanded context and with more open participation. Therefore, OpenJDK development continues to be informed by the previous institutional knowledge and concerns of JDK development from before the code base was open sourced. These inherited sensibilities are reflected in this guide in discussion of past situations and practices, not all of which yet have direct analogs in OpenJDK.

The overarching and long-standing motto for doing development in the JDK is "Do the right thing." The purpose of this guide is to help you, an OpenJDK developer, to discern what the right thing is in different situations. If the right course of action remains unclear, asking a more experienced developer or seeking guidance on an appropriate mailing list is a good approach to making progress. This guide describes norms for development; those with sufficient cause and authority can deviate from these norms. This guide concerns general OpenJDK development and is applicable to multiple releases. Documentation for a particular release should be consulted for logistics and details of working in that release. This guide discusses why something should (or should not) be done, say, adding a new package. The release-specific documentation will describe how a task should be done in that release, such as any needed build changes to add a new package.

The JDK is used directly by millions of developers and the JDK is installed on hundreds of millions of servers and desktops, including growing installations of OpenJDK as part of Linux distributions. Therefore, developing changes in the JDK can be very powerful, but must be done responsibly.

JDK releases vary greatly in size and goals. The largest JDK releases are platform releases corresponding to a new version of the platform and specification. A much smaller kind of release just addresses a coordinated set of security bugs. In decreasing size, the three main kinds of JDK releases are:

Platform

Maintenance

Update

A basic difference between platform releases, like JDK 7, and other kinds of releases is that platform releases have the ability to change the platform API and evolve the language. Platform releases are large in scale; many new APIs are added and thousands of bug fixes and enhancements are included. The JDK 6 update releases are representative of update releases; the same platform specification is implemented, Java SE 6 in this case, and there are typically dozens to a few hundred bug fixes and enhancements in a release (6 update train release notes). Like update releases, maintenance releases implement the same base specification as a previous platform release, such as JDK 1.4.1 and JDK 1.4.2 both being additional implementations of J2SE 1.4, but they have more bug fixes than an update release, on the order of one thousand to two thousand changes (JDK 1.4.2 release notes). While maintenance releases have not been formally issued since JDK 1.4.2, the changes in 6u10 were more on par with a maintenance release than with a regular update release. Successive builds of OpenJDK 6 are akin to small update releases since they all implement the same platform specification, Java SE 6.

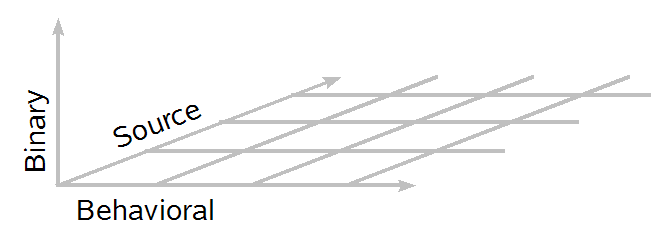

There are three primary kinds of compatibility of concern when evolving the JDK: source, binary, and behavioral. These can be visualized as defining a three-dimensional space.

The farther away a point is from the origin, the more incompatible a change is; the origin itself represents perfect compatibility (no changes). A more nuanced diagram would separate positive and negative compatibility, that is, distinguish between keeping things that work working versus keeping things that don't work not working. In this guide the diagrams will just informally indicate the magnitude of the compatibility impact of a change.

The general evolution policy for Java SE APIs implemented in OpenJDK is:

Don't break binary compatibility (as defined in the Java Language Specification).

Avoid introducing source incompatibilities.

Manage behavioral compatibility changes.

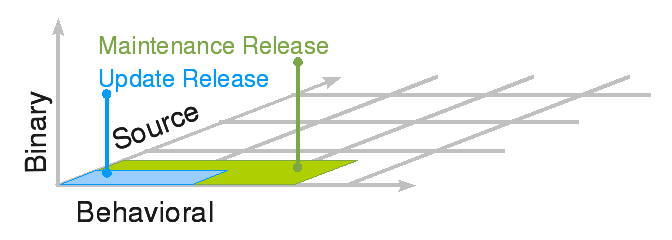

Guidelines for evolving components other than Java SE APIs are discussed later. While these API policies hold for all three kinds of releases, the allowable compatibility regions differ for different kinds of releases. For update and maintenance releases, the reference point to measure compatibility against is an earlier implementation of the same platform specification, such as the initial reference implementation of the platform or an earlier update release.

Since binary incompatible changes are not allowed, the acceptable compatibility region for update and maintenance releases is confined to the (Behavioral × Source) plane, with more latitude on the behavioral axis. For update releases, a limited amount of behavioral change is acceptable, where behavioral change is broadly considered to be any observable aspect of the platform. While client programs should only rely on specified interfaces, they can often accidentally rely on implementation details of the release's behavior, so update releases limit the overall change in behavior. Some minor changes affecting source compatibility can occur in an update release; for example, the version of an endorsed standard or standalone technology included in the release can be upgraded. The JAX-WS component was upgraded from 2.0 to 2.1 in 6u4 and OpenJDK 6 b06 (6535162). Such upgrades should generally preserve the meaning of existing programs that compile and possibly allow new programs to compile. The main compatibility effect should be that the negative compatibility region may get smaller; programs that "don't work" or "don't compile" can become programs that "work" or "compile." Maintenance releases are generally similar, but more behavioral change is allowed and expected since there are a greater number of bug fixes and enhancements.

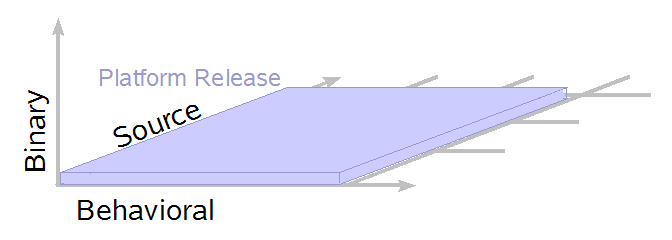

The compatibility reference point for a platform release is an implementation of the previous platform specification. Compared to the previous platform specification, a platform release can add APIs and language features that impact source compatibility (new keywords, etc.), and the implementation can have many changes in behavior (such as changing the iteration order of HashMap). In exceptional circumstances, there is the possibility of a sliver of binary incompatibility, such as to address a security issue in a rarely-used corner of the platform, but the central policy of preserving binary compatibility holds for platform releases as well.

Comparing one build of an in-progress platform release to another, there may be large changes in binary compatibility before a new API is finalized.

As a matter of policy, certain kinds of source incompatibilities will not be introduced into the platform anymore. For example, the Java language in JDK 7 will have no new keywords that invalidate existing sources; instead of a full new keyword, JSR 294 is making "module" a restricted keyword whose use as a keyword versus an identifier will be disambiguated by the compiler.
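Here is a small illustration of how a restricted keyword leaves existing sources valid. The sketch assumes JDK 9 or later. In those releases `module` is a restricted keyword that is treated as a keyword only inside a module declaration (`module-info.java`). The class name `RestrictedKeywordDemo` is hypothetical. The only point is that ordinary code using `module` as an identifier still compiles.

```java
// Compiles on every JDK release, including JDK 9 and later, where "module" is a
// restricted keyword that acts as a keyword only within module-info.java.
class RestrictedKeywordDemo {
    // Using "module" as an ordinary field name is still legal...
    static String module = "java.base";

    // ...and so is using it as a parameter name.
    static String describe(String module) {
        return "module name: " + module;
    }

    public static void main(String[] args) {
        System.out.println(describe(module)); // prints "module name: java.base"
    }
}
```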

When evolving the JDK, compatibility concerns are taken very seriously. However, different standards are applied to evolving various aspects of the platform. From a certain point of view, it is true that any observable difference could potentially cause some unknown application to break. Indeed, just changing the reported version number is incompatible in this sense because, for example, a JNLP file can refuse to run an application on later versions of the platform. Therefore, since making no changes at all is clearly not a viable policy for evolving the platform, changes need to be evaluated against and managed according to a variety of compatibility contracts.
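To make the version-number point concrete, here is a minimal sketch of a program that gates itself on the running platform version, much as a JNLP file can restrict the versions it accepts. `VersionGate` and its allow list are hypothetical. The only real platform API involved is the standard `java.specification.version` system property. `Set.of` assumes JDK 9 or later.

```java
import java.util.Set;

// Hypothetical launcher check, analogous to a JNLP file that only permits
// particular platform versions. VersionGate is illustrative, not a JDK API.
class VersionGate {
    // True only if the running specification version is on the allow list.
    static boolean isSupported(String specVersion, Set<String> allowed) {
        return allowed.contains(specVersion);
    }

    public static void main(String[] args) {
        // Standard system property, e.g. "1.6" on JDK 6 or "17" on JDK 17.
        String spec = System.getProperty("java.specification.version");
        Set<String> allowed = Set.of("1.6", "1.7"); // hypothetical allow list
        if (isSupported(spec, allowed)) {
            System.out.println("Running on supported version " + spec);
        } else {
            // A newer, otherwise compatible platform is rejected only because
            // its reported version changed.
            System.out.println("Refusing to run on version " + spec);
        }
    }
}
```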

For Java programs, there are three main categories of compatibility:

Source: Source compatibility concerns translating Java source code into class files.

Binary: Binary compatibility is defined in The Java Language Specification as preserving the ability to link without error.

Behavioral: Behavioral compatibility includes the semantics of the code that is executed at runtime.

Note that non-source compatibility is sometimes colloquially referred to as "binary compatibility." Such usage is incorrect since the Java Language Specification (JLS) spends an entire chapter precisely defining the term binary compatibility; often behavioral compatibility is the intended notion instead.

Other kinds of compatibility include serial compatibility for Serializable types and migration compatibility. Migration compatibility was a constraint on how generics were added to the platform; libraries and their clients had to be able to be generified independently while preserving the ability of code to be compiled and run.

The basic challenge of compatibility is judging if the benefits of a change outweigh the possibly negative consequences (if any) to existing software and systems impacted by the change. In a closed-world scenario where all the clients of an API are known and can in principle be simultaneously changed, introducing "incompatible" changes is just the small matter of being able to coordinate the engineering necessary to make the change. In contrast, for APIs that are used as widely as the JDK, rigorously finding all the possible programs impacted by an incompatible change is impractical. So evolving such APIs in this environment is quite constrained by comparison.

Generally, we will consider whether a program P is compatible in some fashion (or not) with respect to two versions of a library L1 and L2 that differ in some way. (We will not consider the compatibility impact of such changes to independent implementers of L.) Sometimes only a particular program is of interest: is the change from L1 to L2 compatible with this program? When evaluating how the platform should evolve, a broader consideration of the programs of concern is used. For example, does the change from L1 to L2 cause a problem for any program that currently exists? If so, what fraction of existing programs is affected? Finally, the broadest consideration is whether the change affects any program that could exist. Often, once a platform version is released, the latter two notions are similar. Because knowledge about the set of actual programs is imperfect, it can be more tractable to consider the worst possible outcome for any potential program rather than estimate the impact over actual programs. Stated more formally, depending on the change being considered, judging the change based on the worst possible outcome for any program is more appropriate than judging based on some other kind of norm of the disruption over the space of known programs.

Generally each kind of compatibility has both positive and negative aspects; that is, the positive aspect of keeping things that "work" working and the negative aspect of keeping things that "don't work" not working. For example, the TCK tests for Java compilers include both positive tests of programs that must be accepted and negative tests of programs that must be rejected. In many circumstances, preserving or expanding the positive behavior is more acceptable and important than maintaining the negative behavior and this guide will focus on positive compatibility.

In terms of relative severity, source compatibility problems are usually the mildest since there are often straightforward workarounds, such as adjusting import statements or switching to fully qualified names. Gradations of source compatibility are identified and discussed below. Behavioral compatibility problems can have a range of impacts while true binary compatibility issues are problematic since linking is prevented.

The basic job of any linker or loader is simple: It binds more abstract names to more concrete names, which permits programmers to write code using the more abstract names. (Linkers and Loaders)

A Java compiler's job also includes mapping more abstract names to more concrete ones, specifically mapping simple and qualified names appearing in source code into binary names in class files. Source compatibility concerns this mapping of source code into class files: not only whether or not such a mapping is possible, but also whether or not the resulting class files are suitable. Source compatibility is influenced by changing the set of types available during compilation, such as adding a new class, as well as by changes within existing types themselves, such as adding an overloaded method. The JLS examines a large set of possible changes to classes and interfaces for their binary compatibility impact. All these changes could also be classified according to their source compatibility repercussions, but only a few kinds of changes will be analyzed below.

The most rudimentary kind of positive source compatibility is whether code that compiles against L1 will continue to compile against L2; however, that is not the entirety of the space of concerns since the class file resulting from compilation might not be equivalent. Java source code often uses simple names for types; using information about imports, the compiler will interpret these simple names and transform them into binary names for use in the resulting class file(s). In a class file, the binary name of an entity (along with its signature in the case of methods and constructors) serves as the unique, universal identifier to allow the entity to be referenced. So different degrees of source compatibility can be identified:

Does the client code still compile (or not compile)?

If the client code still compiles, do all the names resolve to the same binary names in the class file?

If the client code still compiles and the names do not all resolve to the same binary names, does a behaviorally equivalent class file result?

Whether or not a program is valid can also be affected by language changes. Usually previously invalid programs are made valid, as when generics were added, but sometimes existing programs are rendered invalid, as when keywords were added (strictfp, assert, and enum).

The version number of the resulting class file is also an external compatibility issue of sorts since it restricts which platform versions the code can be run on. Also, the compilation strategies and environment can vary when different platform versions are used to produce different class file versions, meaning compiler bug fixes and differences in compiler-internal contracts can affect the contents of the resulting class files.

Full source compatibility with any existing program is usually not achievable because of * imports. For example, consider L1 with packages foo and bar where foo includes the class Quux. Then L2 adds class bar.Quux. Consider the following program:

    import foo.*;
    import bar.*;

    public class HelloQuux {
        public static void main(String... args) {
            Object o = Quux.class;
            System.out.println("Hello " + o.toString());
        }
    }

The HelloQuux class will compile under L1 but not under L2, since the name "Quux" is now ambiguous, as reported by javac:

    HelloQuux.java:6: reference to Quux is ambiguous, both class bar.Quux in bar and class foo.Quux in foo match
            Object o = Quux.class;
                       ^
    1 error

An adversarial program could almost always include * imports that conflict with a given library. Therefore, judging source compatibility by requiring all possible programs to compile is an overly restrictive criterion. However, when naming their types, API designers should not reuse "String", "Object", and other names of core classes from packages like java.lang and java.util, to avoid this kind of annoying name conflict.
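The standard library itself has this wrinkle. Both `java.util` and `java.awt` declare a type named `List`, so on-demand imports of both packages make the simple name ambiguous. The sketch below uses a hypothetical class name, `ImportShadowing`. It shows the usual workarounds of adjusting imports or switching to fully qualified names. A single-type import shadows types imported on demand, and a fully qualified name avoids depending on imports at all.

```java
import java.awt.*;
import java.util.*;
// Both java.awt and java.util declare a type named List, so with only the two
// on-demand imports above, javac would report "reference to List is ambiguous".
// This single-type import shadows the on-demand imports and resolves the name.
import java.util.List;

class ImportShadowing {
    // "List" now means java.util.List.
    static List<String> names() {
        return Arrays.asList("foo", "bar");
    }

    // The AWT type remains reachable through its fully qualified name.
    static String awtListName() {
        return java.awt.List.class.getName();
    }

    public static void main(String[] args) {
        System.out.println(names());       // prints [foo, bar]
        System.out.println(awtListName()); // prints java.awt.List
    }
}
```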

Due to the * import wrinkle, a more reasonable definition of source compatibility considers programs transformed to only use fully qualified names. Let FQN(P, L) be program P where each name is replaced by its fully qualified form in the context of libraries L. Call such a library transformation from L1 to L2 binary-preserving source compatible with source program P if FQN(P, L1) equals FQN(P, L2).

This is a strict form of source compatibility that will usually result in class files for P using the same binary names when compiled against both versions of the library. Class files with the same binary names will result when each type has a distinct fully qualified name. Multiple types can have the same fully qualified name but differing binary names; those cases do not arise when the standard naming conventions are being followed.

To illustrate the differing degrees of source compatibility, consider class Lib below.

    // Original version
    public final class Lib {
        public double foo(double d) {
            return d * 2.0;
        }
    }

A change that could break compilation of existing clients is removing method foo:

    // Change that breaks compilation
    public final class Lib {
        // Where oh where has foo gone?
    }

Removing a method is also binary incompatible. The remaining changes to Lib discussed below will all preserve binary compatibility.

Adding a method with a name distinct from any existing method is binary-preserving source compatible; the class files produced by recompiling existing clients of the library will be semantically equivalent since the source methods will be resolved the same way.

    // Binary-preserving source compatible change
    public final class Lib {
        public double foo(double d) {
            return d * 2.0;
        }

        // Method with new name added
        public int bar() {
            return 42;
        }
    }

However, adding overloaded methods has the potential to change method resolution and thus change the signatures of the method call sites in the resulting class file. Whether or not such a change is problematic with respect to source compatibility depends on what semantics are required and how the different overloaded methods operate on the same inputs, which interacts with behavioral equivalence notions.

For example, consider adding to Lib an overloading of foo which takes an int:

    // Behaviorally equivalent source compatible change
    public final class Lib {
        public double foo(double d) {
            return d * 2.0;
        }

        // New overloading
        public double foo(int i) {
            return i * 2.0;
        }
    }

In the original version of Lib, a call to foo with an integer argument will resolve to foo(double), and under the rules for method invocation conversion the value of the int argument will be converted to a double through a primitive widening conversion. So, given the client code:

    public class Client {
        public static void main(String... args) {
            int i = 42;
            double d = (new Lib()).foo(i);
        }
    }

the call to foo gets compiled into a series of bytecode instructions like the following, shown in javap-style output:

    ...
    10: iload_1
    11: i2d
    12: invokevirtual #4; //Method Lib.foo:(D)D
    ...

The i2d instruction converts an int to a double. The string "Lib.foo:(D)D" indicates this is a call to method foo in class Lib where foo takes one double argument and returns a double result.

In contrast, when the same client code is compiled against the behaviorally equivalent version of the code with an overloading for foo, the newly added overloading of foo is selected instead and the argument does not need any conversion:

    ...
    10: iload_1
    11: invokevirtual #4; //Method Lib.foo:(I)D
    ...

The string "Lib.foo:(I)D" indicates the foo method taking one int argument is selected, and there is no intermediary conversion instruction from int to double to convert the argument before the invokevirtual instruction to call the method.

In terms of operations on the arguments and computation of the results, these two overloadings of foo are operationally equivalent. For int call sites, both methods start by converting the int argument to double. In the original method, this conversion is done before the method call; in the new method, the conversion is done inside the method call before the multiply by 2.0. Next, the int-converted-to-double is multiplied by 2.0 and the product is returned.
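One way to check this equivalence is to compile both versions side by side. In the sketch below, `LibV1` and `LibV2` are hypothetical nested stand-ins for the original `Lib` and for the version with the `foo(int)` overloading. The printed results agree. Running `javap -c` on the compiled class should show the `i2d` conversion only at the `LibV1` call site.

```java
// LibV1 and LibV2 are hypothetical stand-ins for the two versions of Lib,
// nested here so that both can be compiled in one file.
class OverloadResolution {
    static final class LibV1 {
        public double foo(double d) {
            return d * 2.0;
        }
    }

    static final class LibV2 {
        public double foo(double d) {
            return d * 2.0;
        }

        // New overloading, selected for int arguments once it exists.
        public double foo(int i) {
            return i * 2.0;
        }
    }

    public static void main(String[] args) {
        int i = 42;
        double r1 = new LibV1().foo(i); // resolves to foo(double); i2d at the call site
        double r2 = new LibV2().foo(i); // resolves to foo(int); conversion inside foo
        System.out.println(r1 + " " + r2); // prints "84.0 84.0"
    }
}
```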

Not all overloaded methods are behaviorally equivalent; some are just compilation preserving. For example, consider adding a third foo method that takes a long argument:

    // Compilation preserving source compatible change
    public final class Lib {
        public double foo(double d) {
            return d * 2.0;
        }

        public double foo(int i) {
            return i * 2.0;
        }

        // New overloading, not behaviorally equivalent
        public double foo(long el) {
            return (double) (el * 2L);
        }
    }

In the previous versions of Lib, a call to foo with a long argument would resolve to calling foo(double), in which case the value of the long argument would be converted to double before the method was called. Then, inside the body of foo, the value would be multiplied by 2.0 and returned. However, with the presence of the foo(long) overloading, call sites to foo with a long argument will resolve to calling foo(long) instead of foo(double). The foo(long) method first multiplies by 2 and then converts to double, the opposite order of operations compared to calling foo(double).

Whether the argument value is converted to double before or after the multiply by two matters, since the two sequences of operations can yield different results. For example, a large positive long value multiplied by two can overflow to a negative value, but a large positive double value when multiplied by two will retain a positive sign.
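The difference in operation order can be observed directly. The two hypothetical helper methods below reproduce the arithmetic of the `foo(double)` path and the `foo(long)` path for a `long` argument. With `Long.MAX_VALUE`, the long multiplication wraps around while the double multiplication does not. For small values the two paths agree.

```java
// Hypothetical helpers reproducing the two operation orders from the text.
class MultiplyOrder {
    // foo(double) path for a long argument: widen to double, then multiply.
    static double convertThenMultiply(long el) {
        return ((double) el) * 2.0;
    }

    // foo(long) path: multiply in 64-bit integer arithmetic, then convert.
    static double multiplyThenConvert(long el) {
        return (double) (el * 2L);
    }

    public static void main(String[] args) {
        long big = Long.MAX_VALUE;
        System.out.println(convertThenMultiply(big)); // prints 1.8446744073709552E19
        System.out.println(multiplyThenConvert(big)); // prints -2.0 (the long product overflowed)
        System.out.println(convertThenMultiply(21) == multiplyThenConvert(21)); // prints true
    }
}
```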

This kind of subtle change in overloading behavior occurred with the addition of a BigDecimal constructor taking a long argument as part of JSR 13.
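Here is a sketch of that `BigDecimal` case, assuming JDK 5 or later. On those releases, `new BigDecimal(longValue)` selects `BigDecimal(long)`. Before that constructor existed, the same source selected `BigDecimal(double)` after widening the argument. The explicit cast below simulates that older resolution. The class and helper names are hypothetical.

```java
import java.math.BigDecimal;

class BigDecimalOverloads {
    // On JDK 5 and later, a long argument selects BigDecimal(long): exact.
    static BigDecimal viaLongConstructor(long value) {
        return new BigDecimal(value);
    }

    // Simulates the older resolution, where the long was widened to double
    // and BigDecimal(double) was called; large values can lose precision.
    static BigDecimal viaDoubleConstructor(long value) {
        return new BigDecimal((double) value);
    }

    public static void main(String[] args) {
        long big = Long.MAX_VALUE;
        System.out.println(viaLongConstructor(big));   // prints 9223372036854775807
        System.out.println(viaDoubleConstructor(big)); // prints 9223372036854775808
    }
}
```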

When adding an overloaded method or constructor to an existing library, if the newly added method could be applicable to the same call sites as the original method, such as if the new method takes the same number of arguments as an original method and has more specific types, call sites in existing clients may now resolve to the new method when recompiled. Well-written programs will follow the Liskov substitution principle and perform "the same" operation on the argument no matter which overloaded method is called. Less than well-written programs may fail to follow this principle.

If a new method or constructor cannot change resolution in existing clients, then the change is a binary-preserving source transformation. In binary-preserving source compatibility, existing clients will yield equivalent class files if recompiled. Whether a compilation preserving change is also behaviorally equivalent depends on the implementation of the methods in question. If a new method changes resolution but the resulting class file has sufficiently similar behavior, the change may still be acceptable, while changing resolution in a way that does not preserve semantics is likely problematic. Changing a library in such a way that current clients no longer compile is seldom appropriate.

JLSv3 §13.2 – What Binary Compatibility Is and Is Not

A change to a type is binary compatible with (equivalently, does not break binary compatibility with) preexisting binaries if preexisting binaries that previously linked without error will continue to link without error.

The JLS defines binary compatibility strictly according to linkage; if P links with L1 and continues to link with L2, the change made in L2 is binary compatible. The runtime behavior after linking is not included in binary compatibility:

JLSv3 §13.4.22 – Method and Constructor Body

Changes to the body of a method or constructor do not break [binary] compatibility with pre-existing binaries.

As an extreme example, if the body of a method is changed to throw an error instead of computing a useful result, the change is certainly a compatibility issue, but it is not a binary compatibility issue since client classes would continue to link.

Also, it is not a binary compatibility issue to add methods to an interface. Class files compiled against the old version of the interface will still link against the new interface despite the class not having an implementation of the new method. If the new method is called at runtime, an AbstractMethodError is thrown; if the new method is not called, the existing methods can be used without incident. (Adding a method to an interface is, however, a source incompatibility that can break compilation of existing implementing classes.)

Binary compatibility across releases has long been a policy of JDK evolution. Long-term portable binaries are judged to be a great asset to the Java SE ecosystem. For this reason, even old deprecated methods have to date been kept in the platform so that legacy class files with references to them will continue to link.

Intuitively, behavioral compatibility should mean that with the same inputs, program P does "the same" or an "equivalent" operation under different versions of libraries or the platform. Defining equivalence can be a bit involved; for example, even just defining a proper equals method in a class can be nontrivial. Formalizing this concept would require an operational semantics of the JVM covering the aspects of the system a program is interested in.

For example, there is a fundamental difference in visible changes between programs that introspect on the system and those that do not. Examples of introspection include calling core reflection, relying on stack trace output, using timing measurements to influence code execution, and so on. For programs that do not use, say, core reflection, changes to the structure of libraries, such as adding new public methods, are entirely transparent. In contrast, a (poorly behaved) program could use reflection to look up the set of public methods on a library class and throw an exception if any unexpected methods were present.
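Here is a minimal sketch of such a poorly behaved program, using core reflection (`Class.getDeclaredMethods`). The nested `Lib` and the method names are hypothetical stand-ins. The check was written against a version of `Lib` that only had `foo`, so a later, binary compatible addition of `bar` makes it fail.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;
import java.util.Set;
import java.util.TreeSet;

class BrittleReflection {
    // Hypothetical newer version of a library class: bar() was added later.
    static final class Lib {
        public double foo(double d) {
            return d * 2.0;
        }

        public int bar() {
            return 42;
        }
    }

    // Names of the public methods declared directly on the given class, sorted.
    static Set<String> publicMethodNames(Class<?> c) {
        Set<String> names = new TreeSet<>();
        for (Method m : c.getDeclaredMethods()) {
            if (Modifier.isPublic(m.getModifiers())) {
                names.add(m.getName());
            }
        }
        return names;
    }

    // Poorly behaved check: treats any method beyond the expected set as fatal.
    static void requireExactly(Class<?> c, Set<String> expected) {
        Set<String> actual = publicMethodNames(c);
        if (!actual.equals(expected)) {
            throw new IllegalStateException("Unexpected methods: " + actual);
        }
    }

    public static void main(String[] args) {
        try {
            // Written when Lib only had foo; the compatible addition of bar breaks it.
            requireExactly(Lib.class, Set.of("foo"));
            System.out.println("ok");
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage()); // prints "Unexpected methods: [bar, foo]"
        }
    }
}
```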

A tricky program could even make decisions based on information like a timing side channel. For example, two threads could repeatedly run different operations and make some indication of progress, such as incrementing an atomic counter, and the relative rates of progress could be compared. If the ratio is over a certain threshold, some unrelated action could be taken, or not. This allows a program to create a dependence on the optimization capabilities of a particular JVM implementation, which is generally outside a reasonable behavioral compatibility contract.

The evolution of a library is constrained by the library's contract included in its specification; for final classes this contract doesn't usually include a prohibition of adding new public methods! While an end-user may not care why a program does not work with a newer version of a library, what contracts are being followed or broken should determine which party has the onus for fixing the problem.

That said, there are times in evolving the JDK when differences are found between the specified behavior and the actual behavior (for example, 4707389 and 6365176). The two basic approaches to fixing these bugs are to change the implementation to match the specified behavior or to change the specification (in a platform release) to match the implementation's (perhaps long-standing) behavior; often the latter option is chosen since it has a lower de facto impact on behavioral compatibility.

While many classes and methods in the platform describe the exact input-output relationship between arguments and returned values, a few methods eschew this approach and are specified to have unspecified behavior. One such example is HashSet:

[HashSet] makes no guarantees as to the iteration order of the set; in particular, it does not guarantee that the order will remain constant over time.

...

[The iterator method] Returns an iterator over the elements in this set. The elements are returned in no particular order.

The iterator algorithm can vary, and has varied, over the years. These variations are fully source and binary compatible. While such behavioral differences are fine in a platform release, because of behavioral compatibility they are only marginally acceptable for a maintenance release and questionable for an update release.
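Client code therefore should not assume any particular `HashSet` order. Here is a minimal sketch contrasting an order-dependent copy with one that imposes an explicit order. It uses `TreeSet`, which is one option among several. Sorting a list works too, and `LinkedHashSet` fits when insertion order is what is wanted. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

class IterationOrder {
    // Fragile: the result reflects HashSet's unspecified iteration order,
    // which may differ between JDK releases.
    static List<String> copyInIterationOrder(Set<String> s) {
        return new ArrayList<>(s);
    }

    // Robust: impose an explicit (here, natural string) order.
    static List<String> copyInSortedOrder(Set<String> s) {
        return new ArrayList<>(new TreeSet<>(s));
    }

    public static void main(String[] args) {
        Set<String> crew = new HashSet<>(List.of("Spock", "Kirk", "Uhura", "Sulu"));
        System.out.println(copyInIterationOrder(crew)); // order not guaranteed
        System.out.println(copyInSortedOrder(crew));    // prints [Kirk, Spock, Sulu, Uhura]
    }
}
```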

Consider two versions of a simple enum representing the crew of the USS Enterprise from the original series, one for the first season:

    public enum TosEnterpriseCrew {
        JAMES_T_KIRK("Jim"),
        LEONARD_MCCOY("Bones"),
        JANICE_RAND("Yeoman Rand"),
        MONTGOMERY_SCOTT("Scotty"),
        SPOCK("Spock"),
        HIKARU_SULU("Sulu"),
        UHURA("Uhura"); // Any first name for Uhura in TOS is non-canon.

        private final String nickname;

        TosEnterpriseCrew(String nickname) {
            this.nickname = nickname;
        }

        public String nickname() { return nickname; }
    }

and another for the second season:

    public enum TosEnterpriseCrew {
        JAMES_T_KIRK("Jim"),
        SPOCK("Spock"),
        MONTGOMERY_SCOTT("Scotty"),
        LEONARD_MCCOY("Bones"),
        /* JANICE_RAND("Yeoman Rand"), */ // Only in 8 episodes!
        HIKARU_SULU("Sulu"),
        PAVEL_CHEKOV("Chekov"), // Introduced in season 2.
        UHURA("Uhura"); // Any first name for Uhura in TOS is non-canon.

        private final String nickname;

        TosEnterpriseCrew(String nickname) {
            this.nickname = nickname;
        }

        public String nickname() { return nickname; }
    }

Compared to the first season, the second season:

Deletes yeoman JANICE_RAND

Adds PAVEL_CHEKOV

Reorders Bones, Scotty, and Spock to better reflect the order of who commands the ship if the Captain and others are unavailable.

These changes have varying source, binary, and behavioral compatibility effects:

Deleting JANICE_RAND is source incompatible, since it can break compilation of existing clients. The deletion is also binary incompatible. Besides being observable via reflection, the deletion affects the behavior of various built-in methods on the enum, including values and valueOf. In addition, the deletion will break previously serialized streams containing this constant. If the original version of the enum had been in a platform release, deleting an enum constant would be unacceptable for any subsequent release.

Adding PAVEL_CHEKOV is binary-preserving source compatible. Since an enum constant is a public static final field, and adding such a field is binary compatible, the addition is also binary compatible. However, the addition of a new constant is visible to reflection and alters the behavior of built-in enum methods. Existing serialized instances continue to work after a new constant is added. Adding an enum constant would be fine in a platform release (and may be acceptable in a smaller release if the API is a standalone technology). However, adding an enum constant in a way that preserves the ordinal position of other constants is preferable since it has greater behavioral compatibility.

Reordering Bones, Scotty, and Spock is a binary-preserving source compatible and binary compatible change, but the reordering changes the behavior of built-in methods, most notably compareTo (as well as the values returned by ordinal). The behavioral compatibility changes make such a reordering at most marginally acceptable even in a platform release.
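The behavioral effects of the reordering and the deletion can be observed with a few built-in enum methods. Both versions need to fit in one file, so the sketch declares them as two hypothetical nested enums, `Season1` and `Season2`, and omits the nickname field. In real code they would be two versions of the same `TosEnterpriseCrew` type.

```java
class EnumEvolution {
    // First-season constant order (nicknames omitted for brevity).
    enum Season1 {
        JAMES_T_KIRK, LEONARD_MCCOY, JANICE_RAND, MONTGOMERY_SCOTT, SPOCK, HIKARU_SULU, UHURA
    }

    // Second season: JANICE_RAND deleted, PAVEL_CHEKOV added, officers reordered.
    enum Season2 {
        JAMES_T_KIRK, SPOCK, MONTGOMERY_SCOTT, LEONARD_MCCOY, HIKARU_SULU, PAVEL_CHEKOV, UHURA
    }

    // True if valueOf finds the constant; valueOf throws IllegalArgumentException otherwise.
    static <E extends Enum<E>> boolean hasConstant(Class<E> type, String name) {
        try {
            Enum.valueOf(type, name);
            return true;
        } catch (IllegalArgumentException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // compareTo follows declaration order, so the reordering flips the result.
        System.out.println(Season1.SPOCK.compareTo(Season1.LEONARD_MCCOY) > 0); // prints true
        System.out.println(Season2.SPOCK.compareTo(Season2.LEONARD_MCCOY) > 0); // prints false
        // The deletion is visible through valueOf.
        System.out.println(hasConstant(Season1.class, "JANICE_RAND")); // prints true
        System.out.println(hasConstant(Season2.class, "JANICE_RAND")); // prints false
    }
}
```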

While there are interactions between source, binary, and behavioral compatibility, considering them distinctly is useful for evaluating the compatibility impact of a change. For example, there are changes for each of the four combinations of (binary compatible/incompatible × source compatible/incompatible):

Binary incompatible, source incompatible (compilation breaks): delete an existing method from a class.

Binary compatible, source incompatible (compilation breaks): add a method to an interface.

Binary incompatible, source compatible (compilation preserving): remove superclasses or superinterfaces of a direct superclass; see the example in JLSv3 13.4.4, Superclasses and Superinterfaces.

Binary compatible, source compatible (binary-preserving): add a method with a new name to a final class.

To increase orthogonality, the source compatibility of a change could be evaluated only for changes that were also binary compatible.

Besides Java SE APIs, the JDK as a platform exposes many other kinds of exported interfaces. These interfaces should generally be evolved with the same regard for behavioral compatibility as Java SE APIs, avoiding gratuitously breaking clients of the interface.

Original Preface to JLS

Except for timing dependencies or other non-determinisms and given sufficient time and sufficient memory space, a program written in the Java programming language should compute the same result on all machines and in all implementations.

The above statement from the original JLS could be regarded as vacuously true about any platform: except for the non-determinisms, a program is deterministic. The difference was that in Java, with programmer discipline, the set of deterministic programs was nontrivial and the set of predictable programs was quite large. In other words, the platform provider and the programmer both have responsibilities in making programs portable in practice; the platform should abide by the specification and conversely programs should tolerate any valid implementation of the specification.

Evolving the platform is a balance between maintaining stability to enjoy various kinds of compatibility and making changes to enjoy various kinds of progress.

When discussing the evolution policies of the JDK, the term "interface" refers not only to an interface type but also, much more broadly, to any means by which one software component interacts with another software component or with a human. This notion of interface covers Java SE APIs, command line options, file locations, file formats, network protocols, GUIs, and many other observable aspects of the JDK.

An interface is defined by its specification. A specification consists of syntax (the structural properties of an interface, such as names and access modifiers) and semantics (the meaning of what the interface is defined to do). Typically the semantics of a Java SE API are given by the text of the Javadoc for the API.

For example, the Javadoc of AbstractList.indexOf provides structural signature information (a method named "indexOf" in a class named "java.util.AbstractList" having the "public" access modifier, accepting one Object parameter and returning an int) as well as semantic information, "Returns the index of the first occurrence of the specified element in this list,...". For this particular method, the specification also includes information about how the method is implemented: "This implementation first gets a list iterator (with listIterator()). Then, it iterates over the list until the specified element is found or the end of the list is reached."

A change to the structural properties defined by a specification or to the semantics of the specification is a change to the specification. For example, changing the access modifier would be a structural specification change. However, the text of a specification may change without changing the semantics of the specification. For example, just rephrasing the sentence "Returns the index..." without changing its meaning would not be a specification change. On the other hand, materially changing the required implementation and in tandem updating the corresponding description, "This implementation...", would be a specification change.
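Because the implementation note is part of the specification, subclasses may depend on it. As a minimal sketch, the hypothetical CountingList below inherits indexOf from AbstractList and overrides listIterator() to count calls; the count shows that the inherited indexOf obtains its iterator through listIterator(), as the specification requires. This only applies where AbstractList's implementation is inherited; classes such as ArrayList override indexOf.

```java
import java.util.AbstractList;
import java.util.Arrays;
import java.util.List;
import java.util.ListIterator;

// Hypothetical read-only list that counts how often listIterator() is called.
class CountingList extends AbstractList<String> {
    private final List<String> backing;
    int listIteratorCalls = 0;

    CountingList(String... elements) {
        this.backing = Arrays.asList(elements);
    }

    // get and size are the only methods AbstractList requires.
    @Override public String get(int index) { return backing.get(index); }
    @Override public int size() { return backing.size(); }

    @Override
    public ListIterator<String> listIterator() {
        listIteratorCalls++;
        return super.listIterator();
    }
}

class IndexOfDemo {
    public static void main(String[] args) {
        CountingList list = new CountingList("a", "b", "c");
        System.out.println(list.indexOf("b"));      // 1
        System.out.println(list.listIteratorCalls); // 1: indexOf went through listIterator()
    }
}
```

If a future release changed the inherited indexOf to bypass listIterator(), a subclass like this would observe the difference, which is why such a change counts as a specification change.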

An official interface of the JDK is supported and usable by general users. These interfaces have an evolution policy that can be relied on for long-term availability. Many of the official interfaces, primarily Java SE APIs, are standardized through the Java Community Process (JCP) or another standards body, such as the Object Management Group (OMG) or the World Wide Web Consortium (W3C). Other official interfaces are associated with the JDK implementation rather than a standards body.

For example, the com.sun.java.swing.plaf package and its subpackages are not part of the Java SE platform specification but are supported classes in the JDK. Likewise, the javac tree API is a supported part of the JDK. While the tree API is related to the standardized language model from JSR 269, Pluggable Annotation Processing API, it is not part of JSR 269 or any other JSR.

Other official interfaces of the JDK cover aspects of the platform such as the names of the commands to run the virtual machine or compiler and the names of the options taken by those commands. Since such details are not part of the Java SE platform specification, the Java Compatibility Kit (JCK) tests include an interview process to provide a level of indirection where implementation-specific values can be specified.

All interfaces that are not official are unofficial. Unofficial interfaces may have different evolution policies than official interfaces since unofficial interfaces are generally not supposed to be relied upon by general users. There are several classes of parties to JDK evolution policies:

Prior to JDK 6, the policy that general users should not rely on certain unofficial APIs lacked an enforcement mechanism. Starting in JDK 6, uses of unofficial types that were public more by historical accident than by explicit design began generating warnings like:

warning: sun.Foo is Sun proprietary API and may be removed in a future release

To provide binary compatibility for existing programs using these types, the proprietary APIs are still fully available at runtime. However, unofficial APIs added after JDK 6 may have stronger enforcement mechanisms, making them unusable at both compile time and runtime. In particular, new JDK-internal interfaces should not be expected to be usable from outside of the JDK.

Changes to official interfaces typically need to go through review processes over and above standard code reviews; check release-specific documentation for process details.

An interface is exported by the component whose specification defines the interface. For example, the Java SE platform specification exports APIs in java.* and javax.*. The JDK implements the Java SE platform specification and exports one realization of that specification.

An interface is imported by a component that uses the interface but cannot extend or modify it. The Java SE platform specification imports various interfaces, such as Requests for Comments (RFCs) defined by the Internet Engineering Task Force (IETF). The JDK implementation also imports interfaces for the purposes of the implementation, such as system calls and standard libraries. For the purposes of tracking dependencies, adding new imported interfaces may trigger additional review procedures.

The developer or group of developers working on a change, from a large feature to a small bug fix, have primary responsibility for the quality of the change and for the consequences (good or bad!) of adding the change to the platform. Part of ensuring quality is judging that a change's benefits justify the change's costs in development, specification, and testing. The various review processes that are in place in a given release are intended to assist and supplement the engineers' primary responsibility and help ensure a beneficial outcome.

Concerns for a developer to keep in mind when working on a change for the JDK include but are not limited to:

General appropriateness. Is the change a good fit for the platform? Is the general evolution policy being followed?

Good specifications. Specifications are ideally clear, concise, and correct all at once. Specifications of JCP-mediated APIs should also include testable assertions suitable for conformance testing. Behavior on null inputs should be clear. As APIs are added, correct @since tags should be included as part of the specification.

Conventions. Are naming conventions and other coding conventions being followed? Are contemporary language features being employed wisely? (Basic code conventions are discussed in Code Conventions for the Java™ Programming Language, but that document has not been updated to cover Java SE 5.0 language features and other evolutions in recommended coding style.)

Code hygiene. Beyond following coding conventions, source code should be free of compiler warnings, including javadoc warnings when the documentation is generated. In addition to the warnings emitted by default, the javac -Xlint facility enables additional recommended warnings. Spurious warnings should be suppressed using SuppressWarnings annotations. Other static analysis tools like FindBugs can be worthwhile to run as well.

Efficiency. Efficient code is important, but remember that "We should forget about small efficiencies, say about 97% of the time: premature optimization is the root of all evil" (Don Knuth). When trying to measure efficiency, beware meddling with micro-benchmarks! Popular JVM implementations are dynamic environments which make measuring small performance differences with micro-benchmarks a subtle task, quick to cause anger. Also, among several alternative implementations, the one that performs best on a particular JVM implementation may not perform best on other JVM implementations of interest.

Awareness of multi-threading and concurrency. The platform has always allowed for the possibility of multiple threads of control. APIs need to be aware of this possibility and should state whether or not they can be safely used in a multi-threaded fashion.

Security practices. Security is good. Insecurity is not. Secure coding guidelines need to be followed.

Adequate testing. Writing regression tests is an integral part of JDK development.

Cross-platform. Write cross-platform code if you can; write platform-specific code if you must. Prefer solving problems in the JDK in the Java language rather than in other languages such as C, C++, or shell; this preference extends to writing regression tests. Note that C and C++ sources must compile successfully under many different compilers, making cross-platform building and testing especially important.

Proper code review. Reviewers sufficiently knowledgeable about the affected areas of the code base need to be marshaled. Large or risky changes may require multiple reviewers in addition to the developer of the change.
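Several of the concerns above (good specifications, code hygiene, and awareness of concurrency) can be seen together in one small class. The sketch below uses a hypothetical SimpleStack, which is not a JDK class, and its @since value is likewise illustrative. Its Javadoc states null behavior and thread safety, an unavoidable unchecked cast is suppressed on a single declaration rather than on the whole class, and synchronized methods back up the thread-safety claim. Compiled with javac -Xlint:unchecked, it should produce no unchecked warnings.

```java
import java.util.Arrays;
import java.util.Objects;

/**
 * A last-in, first-out stack of non-null elements.
 *
 * <p>This class is thread-safe: all public methods synchronize on the
 * stack instance, so instances may be shared between threads.
 *
 * @param <E> the type of elements held in this stack
 * @since 1.7
 */
final class SimpleStack<E> { // hypothetical class; the @since value is illustrative
    private E[] elements;
    private int size;

    /** Creates an empty stack. */
    SimpleStack() {
        // Creating a generic array needs an unchecked cast. It is safe because
        // the array never escapes this class, so the warning is suppressed on
        // this one declaration only.
        @SuppressWarnings("unchecked")
        E[] initial = (E[]) new Object[4];
        elements = initial;
    }

    /**
     * Pushes an element onto the top of this stack.
     *
     * @param e the element to push
     * @throws NullPointerException if {@code e} is {@code null}
     */
    public synchronized void push(E e) {
        Objects.requireNonNull(e, "e");
        if (size == elements.length) {
            elements = Arrays.copyOf(elements, size * 2);
        }
        elements[size++] = e;
    }

    /**
     * Removes and returns the element at the top of this stack.
     *
     * @return the removed element
     * @throws IllegalStateException if this stack is empty
     */
    public synchronized E pop() {
        if (size == 0) {
            throw new IllegalStateException("stack is empty");
        }
        E e = elements[--size];
        elements[size] = null; // drop the reference so it can be garbage collected
        return e;
    }

    /** Returns the number of elements in this stack. */
    public synchronized int size() {
        return size;
    }
}
```

Synchronizing every public method is the simplest way to make the documented thread-safety guarantee true; a higher-throughput design would need a correspondingly more careful specification.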

Code reviews are the most effective and most cost-effective policy to maintain the quality of the JDK code base. Besides double (or triple or quadruple ...) checking a particular change, code reviewing is a team activity which broadens familiarity of the code base among more people, increasing resilience to hostile buses or trucks.

All changes, even trivial ones, need code reviews.

The proper depth and extent of a code review depends on the depth and extent of the change. Trivial changes often merit only trivial code reviews. However, not all small changes are trivial. The basic responsibility of a code reviewer is to verify the coding guidelines are being followed and to suggest improvements. Being a code reviewer entails sharing responsibility for the change, including helping resolve any problems if the responsible engineer is unavailable.

While reviewing patch files is adequate for small changes, larger changes are often easier to review with webrev. The webrev script produces a set of HTML pages providing multiple views of the change: various flavors of diff output as well as the original and new versions of each modified file.

Thou shalt write regression/unit tests to accompany thy JDK changes unless there is good reason not to. JDK regression tests are written using the jtreg harness. Automated tests are preferred over manual ones. Appropriate testing techniques and approaches will vary by technology area. Other kinds of tests generally complement, rather than replace, regression tests.
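A jtreg test is typically a Java class whose main method throws an exception on failure, with test description tags such as @test, @summary, and @run in a leading comment. The sketch below is hypothetical: the class name and the checks are illustrative, and a real regression test would normally also carry an @bug tag with the ID of the bug being fixed.

```java
/*
 * @test
 * @summary Hypothetical example: String.trim removes leading and trailing spaces
 * @run main TrimTest
 */
public class TrimTest {
    public static void main(String[] args) {
        check("  abc  ".trim(), "abc");
        check("abc".trim(), "abc");
        check("".trim(), "");
        System.out.println("Test passed");
    }

    // jtreg reports an exception thrown from main as a test failure.
    private static void check(String actual, String expected) {
        if (!expected.equals(actual)) {
            throw new RuntimeException(
                    "expected \"" + expected + "\" but got \"" + actual + "\"");
        }
    }
}
```

Keeping the test automated and self-checking, rather than relying on someone to inspect output, lets it run unattended as part of the regression suite.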

The general policy for several feature releases has been that core JDK components are marked as deprecated only if they are actively harmful. If using a class or method is merely ill-advised, that is usually not sufficient to earn the deprecated mark.

When an API element is deprecated, the recommended practice is to both apply the @Deprecated annotation and use the @deprecated javadoc tag. Using an annotation to formally indicate deprecation declares the semantics of the code in the code itself, as opposed to the practice, before annotations were available, of marking deprecation with a comment. Using a paired @deprecated javadoc tag for informative purposes allows the deprecation to be explained and any alternative functionality to be recommended.
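As a minimal sketch of the paired markers, assume a hypothetical Readings class whose rawValues method leaks mutable internal state, which fits the "actively harmful" bar above. The @Deprecated annotation lets javac flag uses of rawValues from other classes (listed individually under -Xlint:deprecation), while the @deprecated tag explains the problem and points to the replacement.

```java
import java.util.Arrays;

// Hypothetical API class; the method names and the reason for deprecation are illustrative.
final class Readings {
    private final int[] values;

    /** Creates a set of readings from the given values. */
    Readings(int... values) {
        this.values = values.clone(); // defensive copy of the caller's array
    }

    /**
     * Returns the readings.
     *
     * @return the internal array holding the readings
     * @deprecated The returned array is this object's internal state, so
     *             callers can silently corrupt it. Use
     *             {@link #copyOfValues()} instead.
     */
    @Deprecated
    public int[] rawValues() {
        return values;
    }

    /**
     * Returns a copy of the readings.
     *
     * @return a newly allocated array containing the readings
     */
    public int[] copyOfValues() {
        return Arrays.copyOf(values, values.length);
    }
}
```

Since @Deprecated has runtime retention, tools can also detect the deprecation reflectively, for example with Method.isAnnotationPresent(Deprecated.class).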